Hi folks. This time I want to answer, in detail, a question that one of our readers (Daniel) asked in the comments, because you may have faced the same problem if you are responsible for maintaining a clustered environment.

Below is the question asked by Daniel Bello.

“I have a question: I tried to set a fence virtual device in a virtual environment, but it doesn’t work for me, in some part of my configuration the node doesn’t come back to the cluster after a failure. So I have added a quorum disk, and finally my cluster works ok (the node goes down and after the failure comes back to the cluster), so my question is: what is the difference between a fence device and a quorum disk in a virtual environment?”

You can learn what a fencing device is from our previous article series on Clustering below.

First, let’s see what a quorum disk is.

What is a Quorum Disk?

A quorum disk is a shared storage device used in cluster configurations. It acts like a small database that holds data about the clustered environment, and its job is to tell the cluster which node or nodes should be kept in the ALIVE state. All the nodes can access it concurrently to read and write data.

When connectivity drops among the nodes (one node or several), the quorum isolates the nodes that have lost their connection, takes them out of service, and keeps the services up and running on the active nodes that remain.
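The vote counting behind this isolation can be sketched in a few lines of Python. This is only an illustration, assuming a simple-majority rule in which each node has one vote and the quorum disk adds one extra vote to whichever side can still reach it. The function names and vote values are hypothetical and are not taken from any cluster tool.

```python
def quorum_threshold(expected_votes: int) -> int:
    """Smallest number of votes that is a strict majority of expected_votes."""
    return expected_votes // 2 + 1


def is_quorate(node_votes: int, quorum_disk_votes: int, expected_votes: int) -> bool:
    """True if a partition holding these votes may keep running services."""
    return node_votes + quorum_disk_votes >= quorum_threshold(expected_votes)


# Assumed setup: 2 nodes with 1 vote each, plus a quorum disk with 1 vote.
EXPECTED_VOTES = 3

# The link between the nodes is down; only node 1 still reaches the quorum disk.
node1_ok = is_quorate(node_votes=1, quorum_disk_votes=1, expected_votes=EXPECTED_VOTES)
node2_ok = is_quorate(node_votes=1, quorum_disk_votes=0, expected_votes=EXPECTED_VOTES)

print(node1_ok)  # True: 2 of 3 votes is a majority
print(node2_ok)  # False: 1 of 3 votes is not
```

Under these assumptions only one side of a split can ever hold a majority, which is what lets the cluster decide which nodes stay in service.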

Now let’s turn to the question. This looks like a 2-node environment in which one node has gone down. The situation Daniel faced looks like a “Fencing War” between the two nodes.

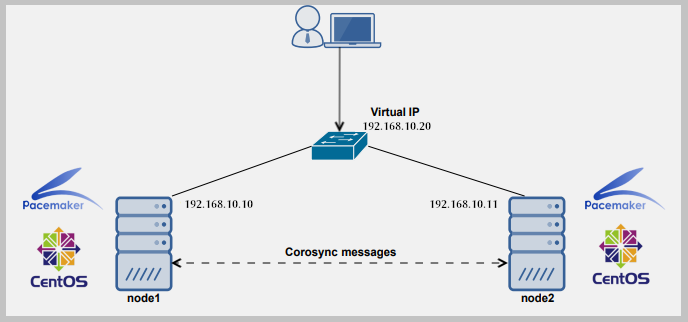

Consider a clustered environment with no quorum disk in its configuration. The cluster has 2 nodes, and one of them appears to have failed: in this particular scenario, connectivity between node 1 and node 2 is completely lost.

Node 1 then sees node 2 as failed because it cannot establish a connection to it, so node 1 decides to fence node 2. At the same time, node 2 sees node 1 as failed for the same reason, and node 2 decides to fence node 1 as well.

Once node 1 has fenced node 2, it takes over the clustered services and resources. Because there is no quorum disk to verify this situation on node 2, node 2 can restart all the services on the server without any connection to node 1.

As mentioned earlier, node 2 also fences node 1 because it cannot see any connection to it. Node 1 then restarts all the services on the server, because there is no quorum to check node 1’s state either.

This is known as a Fencing War.

This cycle will go on indefinitely until an engineer stops the services manually, the servers are shut down, or the network connection between the nodes is re-established. This is where a quorum disk helps. The voting process in a quorum configuration is the mechanism that prevents the cycle described above.
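To make the cycle concrete, here is a toy simulation in Python. It is a sketch under simplifying assumptions, not a model of any real cluster stack: the nodes take turns, a fenced node always reboots and still cannot reach its peer, and the optional `quorum_holder` argument (a hypothetical name) stands for the node that currently holds the quorum vote.

```python
from typing import List, Optional


def simulate_partition(rounds: int, quorum_holder: Optional[str] = None) -> List[str]:
    """Return the fence events of a 2-node cluster whose interconnect is down."""
    events = []
    fencer, victim = "node1", "node2"
    for _ in range(rounds):
        if quorum_holder is not None and fencer != quorum_holder:
            # Without the quorum vote this node is not allowed to fence its peer.
            events.append(f"{fencer} stays out of the cluster")
            break
        events.append(f"{fencer} fences {victim}")
        # The victim reboots, still cannot reach its peer, and takes its turn.
        fencer, victim = victim, fencer
    return events


# No quorum disk: the nodes keep fencing each other for as long as we simulate.
print(simulate_partition(rounds=4))

# Quorum vote held by node1: node2 is fenced once and then stays out.
print(simulate_partition(rounds=4, quorum_holder="node1"))
```

The first call produces four alternating fence events, while the second stops after node1 fences node2, because node2 comes back without a majority and may not fence in return.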

Summary:

- Clustered environments are widely used to protect data and services and to give end users maximum uptime and a live data experience.

- A fence device is used in clustered environments to isolate a node whose state is unknown to the other nodes. The cluster uses the fence device to automatically fence (remove) the failed node, keep the services up and running, and start the failover process.

- A quorum disk is not essential in a clustered environment, but it is better to have one in a 2-node cluster to avoid fencing wars.

- Having a quorum disk in a cluster with more than 2 nodes is not a problem, but a fencing war is less likely to happen in such an environment. Hence, a quorum disk is less important in a cluster of 3 or more nodes than in a 2-node cluster.

- That said, a quorum disk is still good to have in a multi-node cluster, because it lets you run user-customized health checks among the nodes.
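The user-customized health checks from the last point can be pictured as a set of scored checks: each check that passes adds its score, and a node is considered fit only if the total reaches a minimum. The Python sketch below illustrates that idea only. The check functions, scores, and the `min_score` parameter are hypothetical stand-ins, and real checks would run actual probes such as pinging a gateway.

```python
from typing import Callable, List, Tuple

Heuristic = Tuple[Callable[[], bool], int]  # (check function, score)


def node_score(heuristics: List[Heuristic]) -> int:
    """Sum the scores of all checks that currently pass."""
    return sum(score for check, score in heuristics if check())


def node_is_fit(heuristics: List[Heuristic], min_score: int) -> bool:
    """A node stays eligible only if its passing checks reach min_score."""
    return node_score(heuristics) >= min_score


# Hypothetical checks with hard-coded results, for illustration only.
def gateway_reachable() -> bool:
    return True


def storage_mounted() -> bool:
    return False


checks = [(gateway_reachable, 2), (storage_mounted, 1)]
print(node_score(checks))                # 2
print(node_is_fit(checks, min_score=2))  # True
print(node_is_fit(checks, min_score=3))  # False
```

Weighting the checks lets an important probe, such as reaching the gateway, count for more than a minor one.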

Important: Keep in mind that there is a limit on the number of nodes you can add to the quorum. You can add a maximum of 16 nodes to it.

Hope you enjoyed the article. Keep in touch with Tecmint for more handy Linux tech guides.

1) Say we have a 2-node cluster and 1 node fails. As intended, the quorum disk informs the cluster about the failed node, which is now fenced. Does the quorum disk get the vote from the running node (only) to keep the cluster up in a 2-node cluster? If so, can this be called voting (in a democratic sense), or does it have a different technical name?

2) Can we mirror a quorum disk to achieve hardware redundancy? Can we use 2 quorum disks, like master-slave?

Thanks a lot for your answer!!!

It was my first cluster config in my lab environment and is still working right.

Thanks again.

You’re welcome Daniel.

This scenario is also known as “Split Brain”, right ? (http://linux-ha.org/wiki/Split_Brain)

Yes you’re right. It’s the same thing :)