You’ve been running a long rsync job or a Python script on a remote server only to watch it die the moment your SSH session drops, and now you need to understand nohup, screen, tmux, and systemd to stop that from ever happening again.

You logged out for a second. Maybe your VPN dropped. Maybe your laptop lid closed. Either way, that 4-hour database export you were running is gone, and you’re starting from zero.

This happens because Linux ties every process you start in a terminal to that terminal’s session. When the session ends, the kernel sends SIGHUP (the hangup signal) to the process controlling the terminal, usually your shell, which passes it on to the jobs it started. Unless a process handles or ignores SIGHUP, the default action is to exit.

The fix is not complicated once you understand what’s actually happening. Linux gives you 4 solid ways to decouple a process from your terminal session:

- nohup for quick one-off jobs.
- screen for persistent terminal sessions you can reattach to.
- tmux for a more modern version of the same idea.
- systemd services for anything that should survive reboots, too.

Each one fits a different situation, and knowing which to reach for saves you real time when it matters.

Why Processes Die When You Log Out

When you open a terminal and run a command, that command runs as a child process of your shell. The shell and everything it starts belong to one session attached to that terminal, and the shell is normally the session leader.

When you close an SSH connection or log out, the terminal goes away and the kernel delivers SIGHUP to the session leader, which then passes the signal on to the jobs it spawned.

Most programs don’t install a SIGHUP handler, so the signal’s default action applies and they terminate immediately. That’s intentional, because tying processes to sessions makes cleanup predictable.

The problem is that for long-running tasks, you actually want the opposite behavior: you want the process to keep running even after you’re gone.
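
You can watch this default behavior happen without logging out. The sketch below is only an illustration under a few assumptions: sleep 30 stands in for your real job, the variable names are made up for the example, and the signal is sent by hand with kill instead of by a closing terminal.

```shell
# Start a stand-in long-running job in the background.
sleep 30 &
job_pid=$!          # $! is the PID of the most recent background job
sleep 1             # give the job a moment to start

# Deliver the same signal a vanished terminal would cause.
kill -HUP "$job_pid"

# wait returns the job's exit status; a job killed by a signal
# reports 128 + the signal number, and SIGHUP is signal 1.
wait "$job_pid"
exit_status=$?
echo "job $job_pid exited with status $exit_status"
```

On Linux with bash or dash the reported status is 129, which is the shell’s way of saying “terminated by SIGHUP”.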

Method 1: Keep Your Process Running with nohup

nohup stands for “no hangup”. It’s a small wrapper that starts your command with SIGHUP ignored, so the process keeps running when the terminal goes away. If standard output is a terminal, nohup also redirects it to a file called nohup.out in the current directory, so you don’t lose it.

The basic syntax is:

nohup your-command &

The & at the end sends the process to the background so you get your terminal prompt back immediately. Without it, nohup would still protect the process from SIGHUP, but you’d be stuck waiting for it to finish in the foreground.

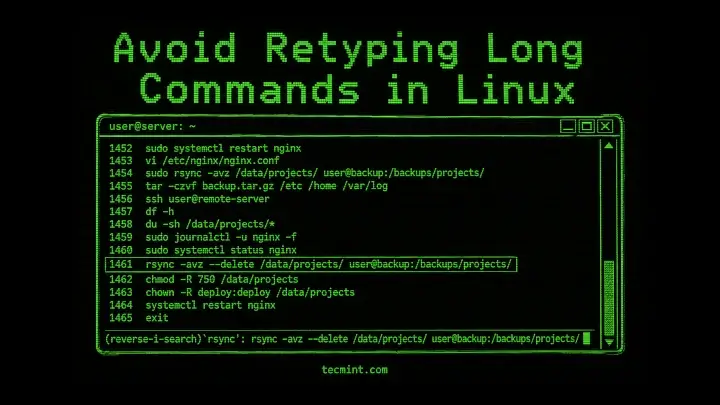
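
If you’d like to convince yourself before trusting nohup with a 4-hour job, here is a small sketch that simulates the hangup. It assumes sleep 30 as a stand-in command and sends SIGHUP manually; job_pid and job_state are names invented for the example.

```shell
# Run a stand-in job under nohup, discarding its output for the demo.
nohup sleep 30 > /dev/null 2>&1 &
job_pid=$!
sleep 1                      # let nohup hand over to the command

kill -HUP "$job_pid"         # simulate the terminal hanging up
sleep 1

# kill -0 sends no signal; it only checks whether the PID still exists.
if kill -0 "$job_pid" 2>/dev/null; then
    job_state="still running"
else
    job_state="gone"
fi
echo "after SIGHUP the job is: $job_state"

kill "$job_pid"              # clean up the demo process
```

The job should report as still running, because nohup set SIGHUP to be ignored before starting it.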

Here’s a real example: say you’re running a long rsync transfer:

nohup rsync -avz /data/backups/ [email protected]:/mnt/backups/ &

Output:

[1] 48271

nohup: ignoring input and appending output to 'nohup.out'

The shell prints the job number in square brackets ([1]) and the PID (48271). You can safely close the terminal now. To watch the progress, tail the output file:

tail -f nohup.out

Output:

sending incremental file list

backups/

backups/db-export-2025-04-30.sql

52,428,800 100% 48.23MB/s 0:00:01 (xfr#1, to-chk=3/5)

The -f flag on tail follows the file in real time, similar to watching a log. Press Ctrl+C to stop following without stopping the rsync job itself.

If you want to redirect output to a specific file instead of nohup.out, do this:

nohup rsync -avz /data/backups/ [email protected]:/mnt/backups/ > /tmp/rsync-progress.log 2>&1 &

- > /tmp/rsync-progress.log redirects standard output to a file you name.
- 2>&1 merges standard error into standard output so errors go to the same file.
- & backgrounds the process.
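
Once you’ve logged out, the job number is gone, so it helps to save the PID somewhere you can find it again. The following is a minimal sketch of that idea, not a standard nohup feature: the temporary directory, the file names, and the sleep 30 stand-in are all assumptions for illustration.

```shell
work_dir=$(mktemp -d)               # hypothetical location for the demo files
log_file="$work_dir/job.log"
pid_file="$work_dir/job.pid"

# "sleep 30" stands in for the real long-running command.
nohup sleep 30 > "$log_file" 2>&1 &
echo $! > "$pid_file"               # remember the PID for later sessions

# Later, possibly from a fresh SSH login:
saved_pid=$(cat "$pid_file")
if kill -0 "$saved_pid" 2>/dev/null; then
    job_status="running"
else
    job_status="finished"
fi
echo "job $saved_pid is $job_status"

# Demo cleanup only; skip this for a real job.
kill "$saved_pid" 2>/dev/null
rm -rf "$work_dir"
```

For a real job you’d keep the PID file in a stable place such as your home directory, so it’s still there when you come back.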

Common mistake: Running nohup command without the &, then pressing Ctrl+C to get your prompt back. Ctrl+C doesn’t send the job to the background; it sends SIGINT, which kills it. Always include the &.

Method 2: Keep Running Long Processes with screen

screen is a terminal multiplexer: it wraps your shell inside a persistent session managed by a separate process. You can detach from it, log out entirely, come back later, reattach, and pick up exactly where you left off. The process inside screen never sees SIGHUP, because it’s attached to screen’s own terminal and not directly to your SSH session.

screen may not be installed by default, so install it with the appropriate command for your distribution.

sudo apt install screen [On Debian, Ubuntu and Mint]

sudo dnf install screen [On RHEL/CentOS/Fedora and Rocky/AlmaLinux]

sudo apk add screen [On Alpine Linux]

sudo pacman -S screen [On Arch Linux]

sudo zypper install screen [On OpenSUSE]

sudo pkg install screen [On FreeBSD]

Start a Named Session

Always name your sessions, because it makes reattaching much easier when you have multiple jobs running:

screen -S db-export

Output:

[No output — you're now inside a new screen session]

You’re now inside a named screen session called db-export. Run whatever command you want here:

python3 /opt/scripts/export-database.py

Detach Without Killing It

Press Ctrl+A, then D to detach. You drop back to your original shell:

[detached from 49012.db-export]

The number 49012 is the PID of the screen session. Your script keeps running inside it, so you can safely log out now.

List Active Sessions

To list running active sessions, run:

screen -ls

Output:

There is a screen on:

49012.db-export (Detached)

1 Socket in /run/screen/S-ravi.

Reattach to a Session

To reattach to a detached session, run:

screen -r db-export

You’re back inside the session, and the script output is right there waiting. If you see There is no screen to be resumed matching db-export, double-check the name with:

screen -ls

Common mistake: Closing the terminal window with the session still attached instead of detaching first with Ctrl+A D. If you do that, the session shows as (Attached) in screen -ls and you’ll need screen -D -r db-export to force-detach and reattach.
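
When a job needs no typing at startup, screen can also create the session already detached, which skips the attach-then-detach dance. This is a hedged sketch: the session name db-export-demo and the sleep 60 stand-in are assumptions, and the script simply reports what it finds if screen isn’t installed or can’t start.

```shell
session=db-export-demo     # hypothetical session name for this sketch

if command -v screen >/dev/null 2>&1; then
    # -d -m creates the session without attaching; -S names it.
    # "sleep 60" stands in for the real job.
    screen -dmS "$session" sleep 60
    sleep 1

    # screen -ls lists sessions as "<pid>.<name>".
    if screen -ls | grep -q "\.$session"; then
        result="session $session is running detached"
        screen -S "$session" -X quit    # end the demo session
    else
        result="session $session did not start"
    fi
else
    result="screen is not installed"
fi
echo "$result"
```

You’d reattach to a session started this way exactly as before, with screen -r and the session name.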

Method 3: tmux (A Better Alternative to screen)

tmux does everything screen does plus a lot more: split panes, multiple windows inside one session, scripted layouts, and a much cleaner configuration format. If you’re starting fresh and haven’t committed to screen, tmux is worth learning first.

tmux may not be installed by default, so install it with the appropriate command for your distribution.

sudo apt install tmux [On Debian, Ubuntu and Mint]

sudo dnf install tmux [On RHEL/CentOS/Fedora and Rocky/AlmaLinux]

sudo apk add tmux [On Alpine Linux]

sudo pacman -S tmux [On Arch Linux]

sudo zypper install tmux [On OpenSUSE]

sudo pkg install tmux [On FreeBSD]

Start a Named Session

To start a new named session, run:

tmux new -s migration

Output:

[No output — you're now inside a tmux session named "migration"]

Next, run your command:

sudo mysqldump -u root -p production_db > /tmp/production-$(date +%F).sql

Detach Without Killing It

Press Ctrl+B, then D:

[detached (from session migration)]

List Sessions

To list running active sessions, run:

tmux ls

Output:

migration: 1 windows (created Fri May 1 09:14:22 2026) [220x50]

Reattach to a Session

To reattach to a detached session, run:

tmux attach -t migration

You’re back inside, exactly as you left it. If there’s only one session, tmux attach without the -t flag works fine.

Bonus: tmux lets you split the terminal into panes so you can run a job in one pane and watch a log file in another without opening two SSH sessions. Press Ctrl+B % to split vertically or Ctrl+B " to split horizontally.
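
The same two-pane layout can be built from a script, so you don’t have to press the key bindings each time. Treat this as a sketch under assumptions: migration-demo is a made-up session name, and both sleep 60 commands are stand-ins for a real job and a real log tail.

```shell
session=migration-demo     # hypothetical session name for this sketch

if command -v tmux >/dev/null 2>&1; then
    # -d creates the session without attaching; the first pane runs the job.
    tmux new-session -d -s "$session" 'sleep 60'
    # -h adds a second pane beside the first, like Ctrl+B %.
    tmux split-window -h -t "$session" 'sleep 60'

    if tmux has-session -t "$session" 2>/dev/null; then
        panes=$(tmux list-panes -t "$session" | wc -l | tr -d ' ')
        result="session $session has $panes pane(s)"
        tmux kill-session -t "$session"    # end the demo session
    else
        result="session $session did not start"
    fi
else
    result="tmux is not installed"
fi
echo "$result"
```

In real use you’d drop the kill-session line and run tmux attach -t with your session name whenever you want to look in on both panes.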

Method 4: Keep Your Scripts Running with systemd

If you need a process that starts automatically on boot, restarts on failure, and runs independently of any user session, the right tool is a systemd service unit. This isn’t overkill for scripts you run regularly – it’s the proper way to manage long-running processes on modern Linux systems.

Here’s how to turn a script into a proper systemd service. Create a unit file:

sudo nano /etc/systemd/system/my-export.service

Add this content, replacing the paths and user with your own:

[Unit]

Description=Database Export Job

After=network.target

[Service]

Type=simple

User=ravi

ExecStart=/usr/bin/python3 /opt/scripts/export-database.py

Restart=on-failure

StandardOutput=journal

StandardError=journal

[Install]

WantedBy=multi-user.target
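
If you set up servers from a script, you may prefer to generate the unit file instead of typing it into an editor. The sketch below writes the same unit with a heredoc into a temporary staging directory; the staging step and the variable names are assumptions of this example, and the install commands are left as comments because they need root.

```shell
unit_name=my-export.service
run_as=ravi                                  # replace with your own user
script=/opt/scripts/export-database.py       # replace with your own script
out_dir=$(mktemp -d)                         # staging directory for this sketch

# The heredoc expands $run_as and $script while writing the file.
cat > "$out_dir/$unit_name" <<EOF
[Unit]
Description=Database Export Job
After=network.target

[Service]
Type=simple
User=$run_as
ExecStart=/usr/bin/python3 $script
Restart=on-failure
StandardOutput=journal
StandardError=journal

[Install]
WantedBy=multi-user.target
EOF

cat "$out_dir/$unit_name"                    # review before installing

# To install it for real (needs root):
#   sudo install -m 644 "$out_dir/$unit_name" /etc/systemd/system/
#   sudo systemctl daemon-reload
```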

Reload systemd so it picks up the new unit:

sudo systemctl daemon-reload

Next, start and enable the service:

sudo systemctl start my-export.service

sudo systemctl enable my-export.service

Check that it’s running:

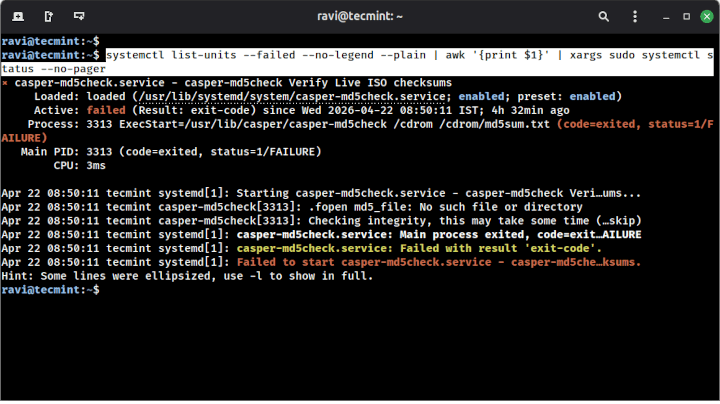

sudo systemctl status my-export.service

Output:

● my-export.service - Database Export Job

Loaded: loaded (/etc/systemd/system/my-export.service; enabled; preset: disabled)

Active: active (running) since Fri 2026-05-01 09:30:01 IST; 4s ago

Main PID: 51204 (python3)

Tasks: 1 (limit: 4915)

Memory: 18.4M

CPU: 211ms

CGroup: /system.slice/my-export.service

└─51204 /usr/bin/python3 /opt/scripts/export-database.py

Watch the live output:

sudo journalctl -u my-export.service -f
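
For a quick check from a script or a cron job, systemctl is-active is handy because its exit code tells you whether the unit is running. Here is a minimal sketch, assuming the my-export.service unit from above; it falls back to a message on machines without systemd, and reading the journal may need sudo depending on your permissions.

```shell
unit=my-export.service

if command -v systemctl >/dev/null 2>&1; then
    # is-active exits 0 only while the unit is running.
    if systemctl is-active --quiet "$unit" 2>/dev/null; then
        state="active"
        # Print the last 20 log lines without opening a pager.
        journalctl -u "$unit" -n 20 --no-pager
    else
        state="not active"
    fi
else
    state="systemctl not available"
fi
echo "$unit: $state"
```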

Choosing the Right Method

Here’s how to think about it in practice:

- nohup: One command, one time, and you don’t need to watch it live.
- screen or tmux: Interactive session you’ll reattach to later, or anything where you want to see live output.
- systemd: Script or service that needs to start on boot, restart on failure, or run independently of any logged-in user.

You’ll likely end up using all three approaches, because each one fits a different situation. A one-off backup? nohup. A data migration you’re monitoring? tmux. A monitoring script that must always be running? systemd.
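
The same rule of thumb can be written down as a tiny shell function. pick_method is a hypothetical helper invented for this sketch, not a real command; it just encodes the two questions above in the order they matter.

```shell
# pick_method <must_survive_reboot: yes|no> <need_live_view: yes|no>
pick_method() {
    if [ "$1" = yes ]; then
        echo systemd        # boot-time start or automatic restarts
    elif [ "$2" = yes ]; then
        echo tmux           # screen fits here just as well
    else
        echo nohup          # fire and forget
    fi
}

pick_method no no      # one-off backup
pick_method no yes     # data migration you are monitoring
pick_method yes no     # monitoring script that must always run
```

The three calls print nohup, tmux, and systemd in that order, matching the examples in the text.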

Conclusion

You now have 4 practical ways to keep processes running after you log out: nohup for quick background jobs, screen and tmux for reattachable interactive sessions, and systemd for anything that belongs as a proper managed service.

Right now, pick one long-running script or scheduled job on your server that you’ve been babysitting manually and move it into a tmux session or a systemd unit.

Start with tmux if you want something fast, or write the unit file if the job really should survive reboots. Both take under 5 minutes to set up once you know the commands.

Which of these methods do you already use, and which one finally made sense after reading this? Have you ever lost a critical job to a dropped SSH session and had to explain it to your team? Drop your story in the comments below.