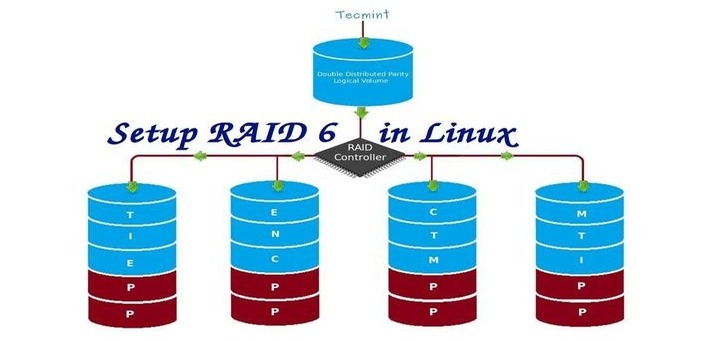

RAID 5 stripes data across multiple drives with distributed parity. Distributed parity means the parity information is not kept on one dedicated disk; it is split up and striped across all member disks along with the data, which gives good data redundancy.

RAID 5 requires at least three hard drives. It is widely used in large-scale production environments because it is cost-effective and provides both performance and redundancy.

What is Parity?

Parity is one of the simplest common methods of detecting errors in data storage. In RAID 5, parity is computed from the data blocks in each stripe (using XOR) and stored alongside them, and it is spread over every disk rather than kept on one. For example, with 4 disks, the equivalent of one disk's capacity is used for parity, but that space is distributed across all 4 disks. If any one of the disks fails, we can still get the data back by rebuilding from the parity information after replacing the failed disk.
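To make the rebuild idea concrete, here is a minimal sketch using shell arithmetic. The byte values and variable names are made up for illustration, and a real array works on whole chunks rather than single bytes, but the XOR relationship is the same.

```shell
#!/bin/sh
# Two hypothetical one-byte data strips and the parity computed from them.
d1=165                     # data strip on disk 1 (0xA5), illustrative value
d2=60                      # data strip on disk 2 (0x3C), illustrative value
parity=$(( d1 ^ d2 ))      # parity strip that would live on disk 3

# Simulate losing disk 1: XOR the surviving strips to rebuild its content.
rebuilt=$(( parity ^ d2 ))

echo "parity=$parity rebuilt=$rebuilt original=$d1"
```

Because XOR is its own inverse, any single missing strip can be recomputed from the remaining strips in the same stripe.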

Pros and Cons of RAID 5

- Gives good read performance because data is striped across all disks.

- Supports redundancy and fault tolerance.

- Supports hot spare options.

- The capacity of one disk is used up by parity information.

- No data loss if a single disk fails. We can rebuild from parity after replacing the failed disk.

- Suits a transaction-oriented environment as the reading will be faster.

- Due to parity overhead, writing will be slow.

- Rebuild takes a long time.
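The capacity cost in the list above can be estimated with a small helper. This is only a sketch: the function name `raid5_usable_gb` is hypothetical, it assumes all disks are the same size, and it ignores metadata and filesystem overhead.

```shell
# raid5_usable_gb DISKS SIZE_GB
# Approximate usable capacity of a RAID 5 array built from equal-sized disks:
# one disk's worth of space goes to parity, so usable = (DISKS - 1) * SIZE_GB.
raid5_usable_gb() {
    disks=$1
    size_gb=$2
    if [ "$disks" -lt 3 ]; then
        echo "RAID 5 needs at least 3 disks" >&2
        return 1
    fi
    echo $(( (disks - 1) * size_gb ))
}

# The three 20GB disks used in this article:
raid5_usable_gb 3 20
```

For the three-disk setup in this article that works out to roughly 40GB usable out of 60GB raw.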

Requirements

A minimum of 3 hard drives is required to create RAID 5. You can add more disks as long as the system, or a dedicated hardware RAID controller, has enough ports for them. Here, we are using software RAID and the ‘mdadm‘ package to create the array.

mdadm is a package that allows us to configure and manage RAID devices in Linux. By default there is no configuration file for RAID, so after creating and configuring the RAID setup we must save the configuration ourselves in a separate file called mdadm.conf.

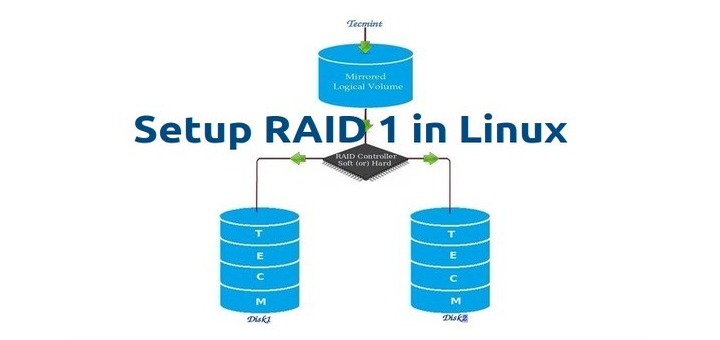

Before moving further, I suggest you go through the following articles for understanding the basics of RAID in Linux.

- Basic Concepts of RAID in Linux – Part 1

- Creating RAID 0 (Stripe) in Linux – Part 2

- Setting up RAID 1 (Mirroring) in Linux – Part 3

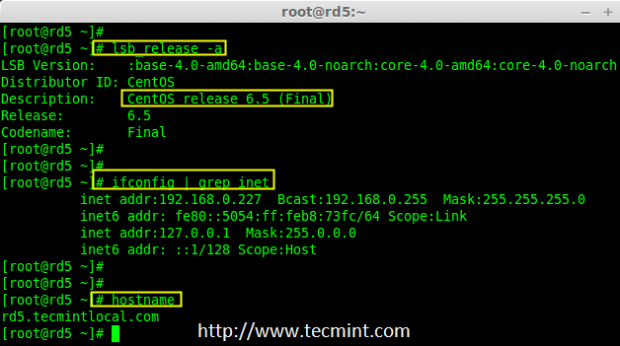

My Server Setup

- Operating System : CentOS 6.5 Final

- IP Address : 192.168.0.227

- Hostname : rd5.tecmintlocal.com

- Disk 1 [20GB] : /dev/sdb

- Disk 2 [20GB] : /dev/sdc

- Disk 3 [20GB] : /dev/sdd

This article is Part 4 of a 9-tutorial RAID series. Here we are going to set up a software RAID 5 with distributed parity on Linux systems or servers using three 20GB disks named /dev/sdb, /dev/sdc, and /dev/sdd.

Step 1: Installing mdadm and Verify Drives

1. As mentioned earlier, we’re using the CentOS 6.5 Final release for this RAID setup, but the same steps can be followed on other Linux distributions.

# lsb_release -a

# ifconfig | grep inet

2. If you’re following our RAID series, we assume that you’ve already installed the ‘mdadm‘ package. If not, use the following command according to your Linux distribution to install the package.

# yum install mdadm [on RedHat systems]

# apt-get install mdadm [on Debian systems]

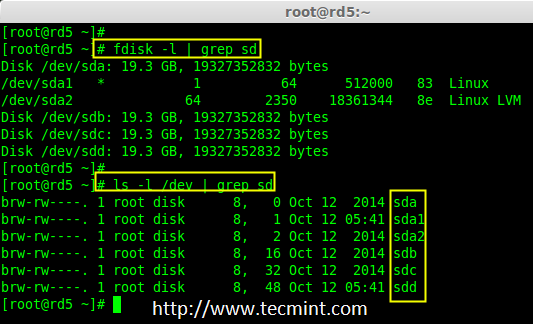

3. After the ‘mdadm‘ package installation, let’s list the three 20GB disks which we have added to our system using ‘fdisk‘ command.

# fdisk -l | grep sd

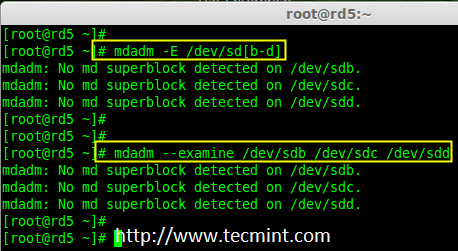

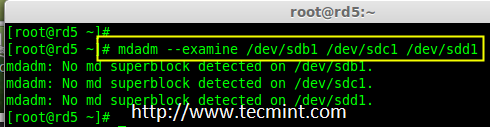

4. Now it’s time to examine the attached three drives for any existing RAID blocks on these drives using the following command.

# mdadm -E /dev/sd[b-d]

or

# mdadm --examine /dev/sdb /dev/sdc /dev/sdd

Note: The above image shows that no super-block has been detected yet, so there is no RAID defined on any of the three drives. Let us start to create one now.

Step 2: Partitioning the Disks for RAID

5. First and foremost, we have to partition the disks (/dev/sdb, /dev/sdc, and /dev/sdd) before adding them to a RAID array, so let us define the partitions using the ‘fdisk’ command before moving on to the next steps.

# fdisk /dev/sdb

# fdisk /dev/sdc

# fdisk /dev/sdd

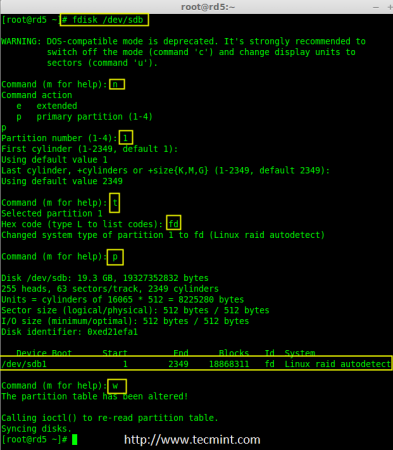

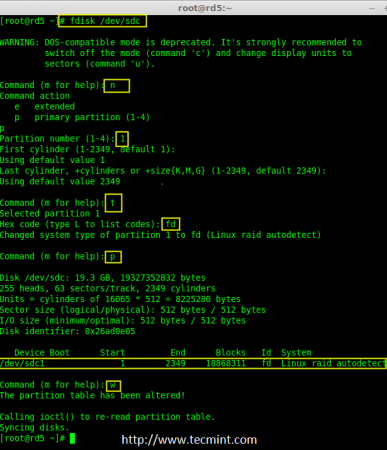

Create /dev/sdb Partition

Please follow the below instructions to create a partition on the /dev/sdb drive.

- Press ‘n‘ to create a new partition.

- Then choose ‘p‘ for a primary partition. Here we are choosing primary because there are no partitions defined yet.

- Then choose ‘1‘ as the partition number. By default, it will be 1.

- For the first and last cylinder, we don’t have to specify a size because we need the whole disk for RAID, so just press Enter two times to accept the default full size.

- Next press ‘p‘ to print the created partition.

- Press ‘t‘ to change the partition type. To list every available type, press ‘L‘.

- Enter ‘fd‘ to select the Linux raid autodetect type.

- Press ‘p‘ again to print the partition table and confirm the changes we have made.

- Use ‘w‘ to write the changes.

Note: Follow the same steps to create partitions on the sdc and sdd drives too.
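If you would rather script this than repeat the interactive fdisk session three times, one possible approach is ‘parted‘ in script mode. The sketch below is an assumption on our part and not part of the original walkthrough: the helper names `run` and `partition_for_raid` are hypothetical, and by default it only prints the commands (DRY_RUN=1) so nothing is changed on disk.

```shell
# Print (or run) parted commands that create one full-size RAID partition.
# DRY_RUN=1, the default here, only prints the commands. Setting DRY_RUN=0
# and running as root would execute them and DESTROY existing data.
DRY_RUN=${DRY_RUN:-1}

run() {
    if [ "$DRY_RUN" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

partition_for_raid() {
    disk=$1
    run parted -s "$disk" mklabel msdos            # new MBR partition table
    run parted -s "$disk" mkpart primary 1MiB 100% # one partition, whole disk
    run parted -s "$disk" set 1 raid on            # mark partition 1 as RAID
}

for disk in /dev/sdb /dev/sdc /dev/sdd; do
    partition_for_raid "$disk"
done
```

Review the printed commands against your own device names before ever switching off the dry-run mode.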

Create /dev/sdc Partition

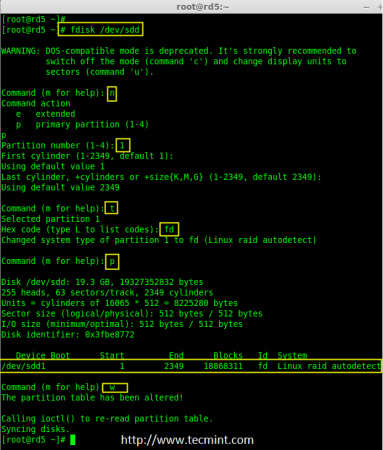

Now partition the sdc and sdd drives, either by following the screenshots or by repeating the steps listed above.

# fdisk /dev/sdc

Create /dev/sdd Partition

# fdisk /dev/sdd

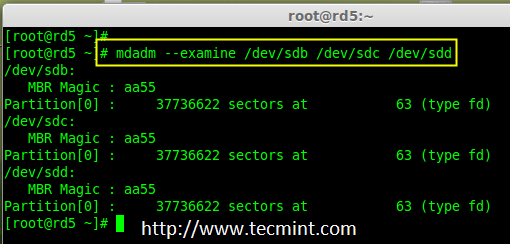

6. After creating partitions, check for changes in all three drives sdb, sdc, & sdd.

# mdadm --examine /dev/sdb /dev/sdc /dev/sdd

or

# mdadm -E /dev/sd[b-d]

Note: The above image shows that the partition type is fd, i.e. RAID.

7. Now check for RAID blocks in the newly created partitions. If no super-blocks are detected, we can move forward and create a new RAID 5 setup on these drives.

Step 3: Creating md device md0

8. Now create a RAID device ‘md0‘ (i.e. /dev/md0) with RAID level 5 on all the newly created partitions (sdb1, sdc1, and sdd1) using the below command.

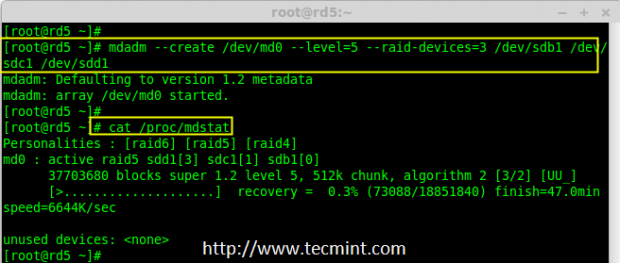

# mdadm --create /dev/md0 --level=5 --raid-devices=3 /dev/sdb1 /dev/sdc1 /dev/sdd1

or

# mdadm -C /dev/md0 -l 5 -n 3 /dev/sd[b-d]1

9. After creating the RAID device, check and verify the array, the devices included, and the RAID level from the mdstat output.

# cat /proc/mdstat

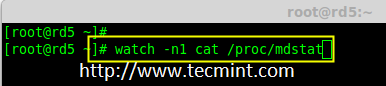

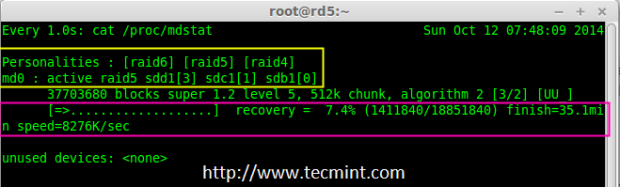

If you want to monitor the build process as it runs, pass ‘cat /proc/mdstat‘ to the ‘watch‘ command, which will refresh the screen every 1 second.

# watch -n1 cat /proc/mdstat
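If you only want the progress figure, for example in a script, a small filter can pull it out of the mdstat text. This is a sketch under assumptions: `mdstat_progress` is a hypothetical helper name, and the sample text is an illustrative snippet in the usual mdstat layout, not output captured from the server in this article.

```shell
# Print the resync/recovery percentage found in mdstat-style text on stdin.
# Prints nothing when no rebuild or resync line is present.
mdstat_progress() {
    sed -nE 's/.*(resync|recovery) *= *([0-9.]+%).*/\2/p'
}

# Illustrative sample only; on a live system use: mdstat_progress < /proc/mdstat
sample='md0 : active raid5 sdd1[3] sdc1[1] sdb1[0]
      41909248 blocks super 1.2 level 5, 512k chunk, algorithm 2 [3/2] [UU_]
      [==>..................]  recovery = 12.6% (2637824/20954112) finish=1.5min speed=199680K/sec'

printf '%s\n' "$sample" | mdstat_progress
```

An empty result generally means the array is not currently rebuilding or resyncing.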

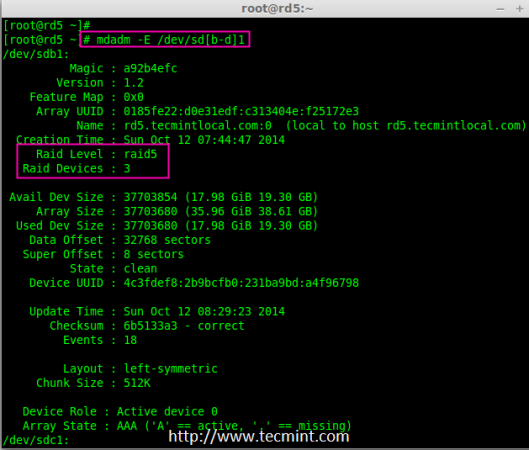

10. After the array has been created, verify the RAID devices using the following command.

# mdadm -E /dev/sd[b-d]1

Note: The output of the above command will be a little long as it prints the information for all three drives.

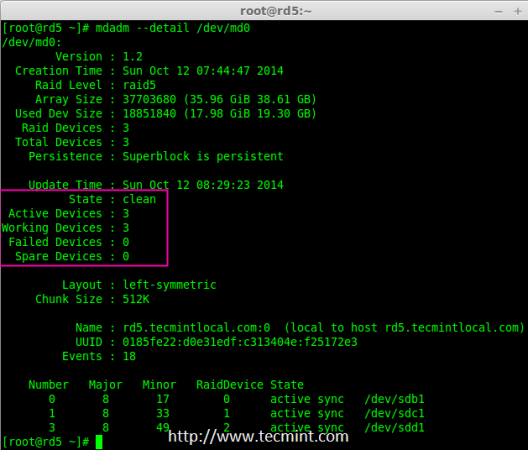

11. Next, verify the RAID array to confirm that the devices we’ve included in it are running and have started to re-sync.

# mdadm --detail /dev/md0

Step 4: Creating file system for md0

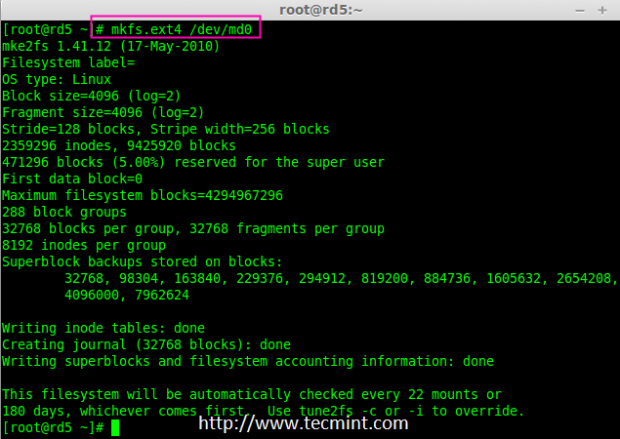

12. Create a file system for the ‘md0‘ device using ext4 before mounting.

# mkfs.ext4 /dev/md0

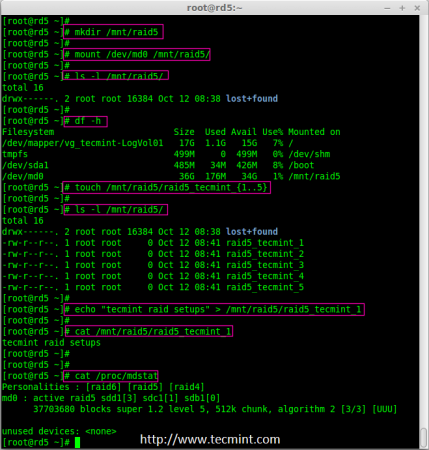

13. Now create a directory under ‘/mnt‘, mount the new filesystem on /mnt/raid5, and list the files under the mount point. You will see the lost+found directory.

# mkdir /mnt/raid5

# mount /dev/md0 /mnt/raid5/

# ls -l /mnt/raid5/

14. Create a few files under the mount point /mnt/raid5 and write some text into one of them to verify the content.

# touch /mnt/raid5/raid5_tecmint_{1..5}

# ls -l /mnt/raid5/

# echo "tecmint raid setups" > /mnt/raid5/raid5_tecmint_1

# cat /mnt/raid5/raid5_tecmint_1

# cat /proc/mdstat

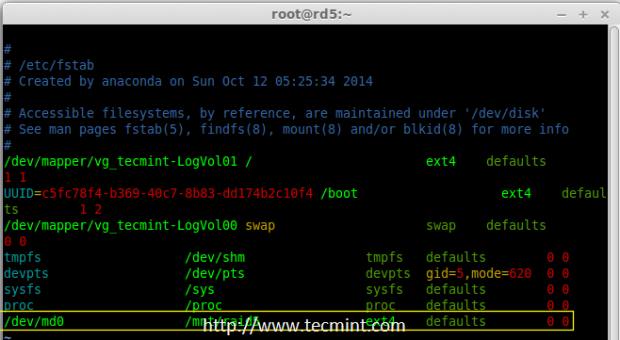

15. We need to add an entry in fstab, otherwise the filesystem will not be mounted after a system reboot. To add an entry, edit the fstab file and append the line shown below. The mount point will differ according to your environment.

# vim /etc/fstab

/dev/md0 /mnt/raid5 ext4 defaults 0 0
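Because an md device can come up under a different number if it is assembled differently, some administrators prefer to reference the filesystem by UUID in fstab rather than by /dev/md0. The following is a minimal sketch of that alternative: `fstab_line` is a hypothetical helper, and the UUID shown is a made-up placeholder, not a value from this setup.

```shell
# Build an fstab line that mounts a filesystem by UUID instead of device name.
fstab_line() {
    uuid=$1
    mount_point=$2
    fs_type=$3
    printf 'UUID=%s %s %s defaults 0 0\n' "$uuid" "$mount_point" "$fs_type"
}

# On a live system the UUID would come from:
#   blkid -s UUID -o value /dev/md0
# The value below is a placeholder for illustration only.
fstab_line "01234567-89ab-cdef-0123-456789abcdef" /mnt/raid5 ext4
```

The printed line can then be appended to /etc/fstab in place of the /dev/md0 entry and checked with ‘mount -av‘ as in the next step.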

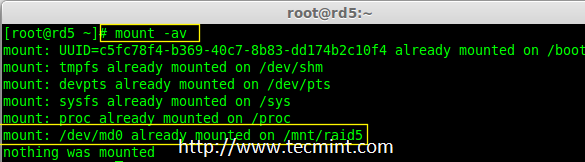

16. Next, run the ‘mount -av‘ command to check whether there are any errors in the fstab entry.

# mount -av

Step 5: Save Raid 5 Configuration

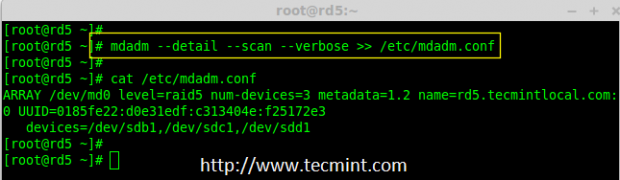

17. As mentioned earlier in the requirements section, RAID doesn’t have a config file by default, so we have to save it manually. If this step is skipped, the RAID device may not come back as md0 after a reboot; it can be assembled under some other number instead (for example md127).

So, we must save the configuration before the system reboots. Once the configuration is saved, it is read during boot and the RAID array is assembled with it.

# mdadm --detail --scan --verbose >> /etc/mdadm.conf

Note: Saving the configuration keeps the array assigned to the md0 device across reboots.
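The location of mdadm.conf is not the same everywhere: Debian and Ubuntu keep it at /etc/mdadm/mdadm.conf, while Red Hat style systems such as CentOS use /etc/mdadm.conf. The sketch below picks between the two; `mdadm_conf_path` is a hypothetical helper, and its optional root-prefix argument exists only so it can be tried out without touching /etc.

```shell
# Pick the mdadm.conf location for this system.
# If an /etc/mdadm directory exists (Debian/Ubuntu layout), use the file
# inside it; otherwise fall back to /etc/mdadm.conf (Red Hat style layout).
mdadm_conf_path() {
    root=${1:-}
    if [ -d "$root/etc/mdadm" ]; then
        echo "$root/etc/mdadm/mdadm.conf"
    else
        echo "$root/etc/mdadm.conf"
    fi
}

echo "Append the scan output to: $(mdadm_conf_path)"
# As root, for example:
#   mdadm --detail --scan >> "$(mdadm_conf_path)"
#   update-initramfs -u    # Debian/Ubuntu only, so the initramfs sees the array
```

This only chooses a path and prints it; the commands that actually write the file are left as comments so they can be reviewed first.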

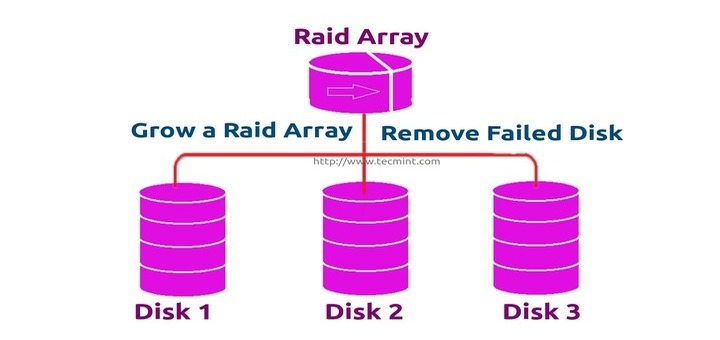

Step 6: Adding Spare Drives

18. What is the use of adding a spare drive? A spare is very useful: if any one of the disks in our array fails, the spare drive becomes active automatically, the rebuild starts, and the data is synced onto it from the other disks, so redundancy is restored without waiting for a manual replacement.

For more instructions on how to add a spare drive and check RAID 5 fault tolerance, read #Step 6 and #Step 7 in the following article.

Conclusion

In this article, we have seen how to set up RAID 5 using three disks. In upcoming articles, we will see how to troubleshoot a disk failure in RAID 5 and how to replace the disk for recovery.

After following this guide my machine will now not boot.

I have to wipe the OS and start all over with my NAS setup.

If you are setting up on Debian 10, avoid this guide.

There are too many errors and differences in commands.

Hey, Creator of this tutorial…

Please correct your TYPOS!!!!!

in the comments and also on this site they needed to correct YOUR tutorial typos!!!!

CORRECT IT PLEASE as it is on the top search engines so noobs like me DONT HAVE TO INVESTIGATE FURTHER!!!!

On this page – https://www.tecmint.com/create-raid-5-in-linux/

You have multiple errors.

1. Change this error:

to this correction:

2. Change these argument syntax errors:

Sorry, your short command is wrong!

‘mdadm -C /dev/md0 -l=5 -n=3 /dev/sd[b-d]1’ <== wrong

'mdadm -C /dev/md0 -l 5 -n 3 /dev/sd[b-d]1' <== right!!! = is only for long version

to format in ext4:

/dev/md0 is apparently in use by the system; will not make a filesystem here!

At least in my 16.04.3 LTS Ubuntu (Xenial) – config files in /etc/mdadm/mdadm.conf – not sure if the references to /etc/mdadm/mdadm.conf are correct at different versions/distros. This appears a few places above.

Otherwise, this article was great and very helpful

Thank you

-Steven

I am using Debian 10 (buster) and i also show “/etc/mdadm/mdadm.conf” but even when i correct the command to run:

I get the following error:

mdadm: /etc/mdadm/mdadm.conf does not appear to be an md device

This guide has been very helpful and I’m so close to the finish line I just need help on this last step.

Can anyone guide me in the right direction?

Wouldn’t it be better to add the raid array to fstab via UUID?

did this on an Arch Linux setup and it worked flawlessly

that cat etc/mdadm.conf it always says no such file or directory

@ludvig,

You need to create the mdadm.conf manually using below command. This should be done before rebooting once you create your RAID setup.

# mdadm -E -s -v >> /etc/mdadm.conf

If you are using this setup with drives that are > 2TB you cannot use fdisk, you must use parted to create the partitions. Everything else can be the same, however.

You can use gdisk for partitions >2TB as well, and it has all the same flags as fdisk.

Read errors are a serious issue with RAID 5.

If the disk fails, the RAID will have to read all the disks that remain in the RAID group to rebuild.

A read error during the rebuild will cause data loss since we need to read all other disks during rebuild.

http://www.zdnet.com/article/why-raid-5-stops-working-in-2009/

Consumer SATA disks are typically rated at one unrecoverable read error per 10^14 bits read, which is about 12.5 TB. That means if the data on the surviving disks totals around 12.5 TB, a read error during the rebuild becomes likely, although not strictly certain.
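The 12.5 TB figure and the resulting risk can be roughly estimated as follows. This is a back-of-the-envelope sketch, not a reliability model: `ure_failure_pct` is a hypothetical helper, and it assumes an error rate of 1 per 10^14 bits with independent errors (a Poisson approximation).

```shell
# ure_failure_pct TB_READ
# Approximate chance, in percent, of hitting at least one unrecoverable read
# error while reading TB_READ terabytes, assuming 1 error per 1e14 bits and
# independent errors: P = 1 - exp(-bits_read / 1e14).
ure_failure_pct() {
    awk -v tb="$1" 'BEGIN {
        bits = tb * 1e12 * 8
        printf "%.1f\n", (1 - exp(-bits / 1e14)) * 100
    }'
}

ure_failure_pct 12.5    # reading 12.5 TB during a rebuild
```

Under these assumptions, reading 12.5 TB gives roughly a 63% chance of at least one read error, so a failed rebuild is likely at that size but not guaranteed.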

http://www.lucidti.com/zfs-checksums-add-reliability-to-nas-storage