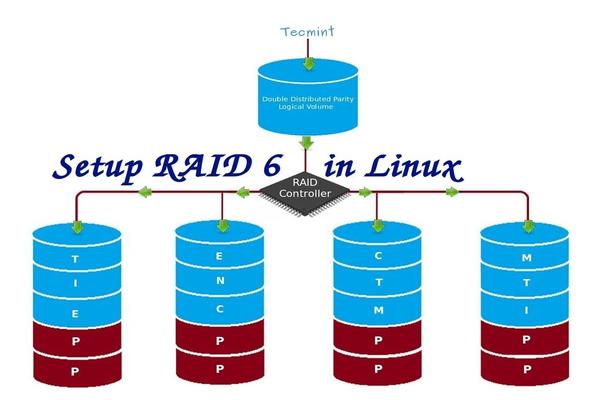

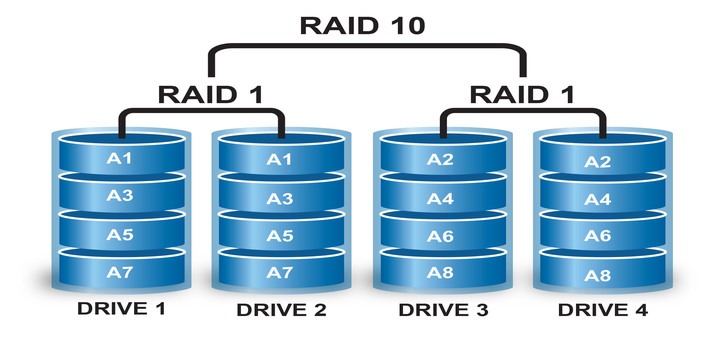

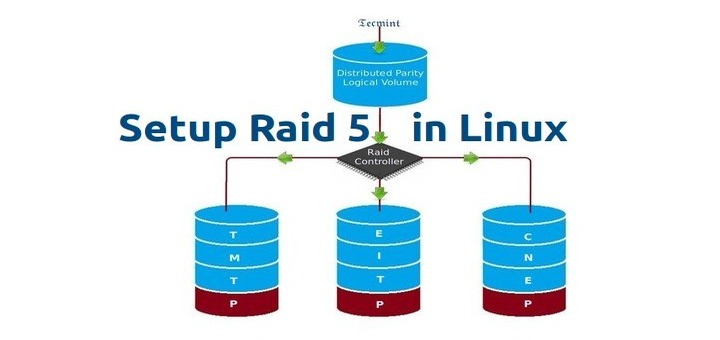

RAID 6 is an upgraded version of RAID 5 that keeps two distributed parity blocks per stripe, so the array tolerates the failure of any two drives. A mission-critical system stays operational even when two disks fail concurrently. It is similar to RAID 5 but more robust, because it gives up the capacity of one more disk to parity.

In our earlier article we saw distributed parity in RAID 5; in this article we look at RAID 6 with double distributed parity. Don’t expect better performance than other RAID levels; for that we would also need a dedicated RAID controller. In RAID 6, even if we lose 2 disks, we can get the data back by replacing the failed drives and rebuilding from parity.

To set up RAID 6, a minimum of 4 disks is required in a set, and a set may contain many more. Reads are served from all the drives, so reading is fast, whereas writing is slower because two parity blocks have to be computed and written for every stripe.

Now many of us may ask why we need RAID 6 when it doesn’t perform like other RAID levels. The answer is fault tolerance: if you need high fault tolerance, choose RAID 6. Many high-availability environments use RAID 6 for databases, because the database is the most important asset and must be kept safe at any cost. It can also be useful for video streaming environments.

Pros and Cons of RAID 6

- Read performance is good.

- RAID 6 is expensive, as the capacity of two drives is used for parity.

- We lose two disks’ worth of capacity to parity information (double parity).

- No data loss, even after two disks fail. We can rebuild from parity after replacing the failed disks.

- Reading is fast because data is read from multiple disks, but write performance will be poor without a dedicated RAID controller.
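The capacity cost of double parity can be worked out directly. The sketch below uses a hypothetical helper, `raid6_usable_gb` (our own name, not part of mdadm), and assumes all member disks are the same size.

```shell
# Hypothetical helper: usable capacity of a RAID 6 set of equal-sized disks.
# Two disks' worth of space goes to parity, so usable = (disks - 2) * size.
raid6_usable_gb() {
    disks=$1
    size_gb=$2
    if [ "$disks" -lt 4 ]; then
        echo "RAID 6 needs at least 4 disks" >&2
        return 1
    fi
    echo $(( (disks - 2) * size_gb ))
}

# The four 20GB disks used in this article:
raid6_usable_gb 4 20
```

For the four 20GB disks used here this prints 40, so half of the raw 80GB is available for data. The filesystem will report slightly less because of metadata overhead.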

Requirements

A minimum of 4 disks is required to create RAID 6, and you can add more disks to the set. With software RAID the parity is calculated by the CPU, so don’t expect the best write performance from RAID 6. For that we need a dedicated physical RAID controller.

If you are new to RAID setup, we recommend going through the RAID articles below.

- Basic Concepts of RAID in Linux – Part 1

- Creating Software RAID 0 (Stripe) in Linux – Part 2

- Setting up RAID 1 (Mirroring) in Linux – Part 3

My Server Setup

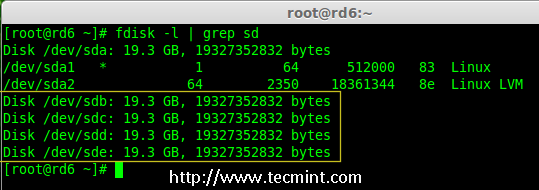

- Operating System : CentOS 6.5 Final

- IP Address : 192.168.0.228

- Hostname : rd6.tecmintlocal.com

- Disk 1 [20GB] : /dev/sdb

- Disk 2 [20GB] : /dev/sdc

- Disk 3 [20GB] : /dev/sdd

- Disk 4 [20GB] : /dev/sde

This article is Part 5 of a 9-tutorial RAID series. Here we are going to see how to create and set up software RAID 6, or striping with double distributed parity, on Linux systems or servers using four 20GB disks named /dev/sdb, /dev/sdc, /dev/sdd and /dev/sde.

Step 1: Installing mdadm Tool and Examine Drives

1. If you’ve been following our last two RAID articles (Part 2 and Part 3), we’ve already shown how to install the ‘mdadm‘ tool. If you’re new to this series, ‘mdadm‘ is a tool to create and manage RAID in Linux systems. Let’s install it using the following command according to your Linux distribution.

# yum install mdadm [on RedHat systems]

# apt-get install mdadm [on Debian systems]

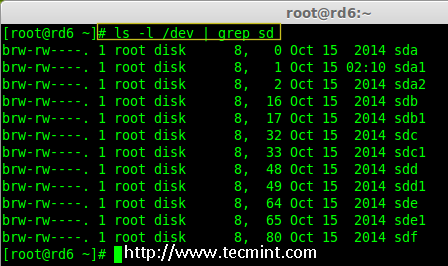

2. After installing the tool, it’s time to verify the four attached drives that we are going to use for the RAID, using the following ‘fdisk‘ command.

# fdisk -l | grep sd

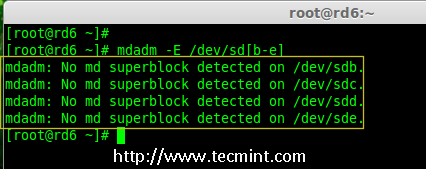

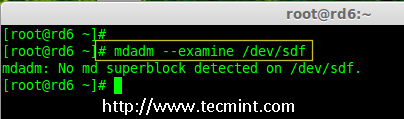

3. Before creating the RAID, always examine the disk drives to check whether any RAID is already configured on them. Either of the following commands will do.

# mdadm -E /dev/sd[b-e]

# mdadm --examine /dev/sdb /dev/sdc /dev/sdd /dev/sde

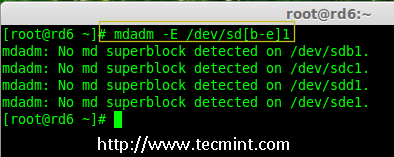

Note: The above image shows that no super-block was detected, meaning no RAID is defined on the four disk drives. We can move on to creating RAID 6.

Step 2: Drive Partitioning for RAID 6

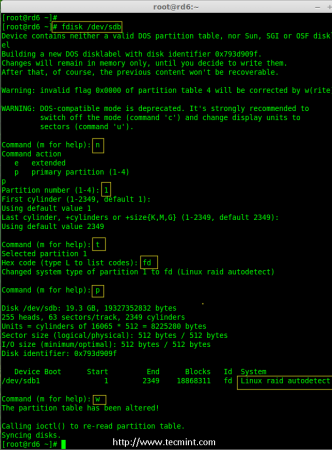

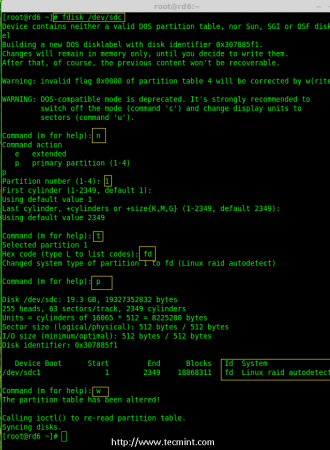

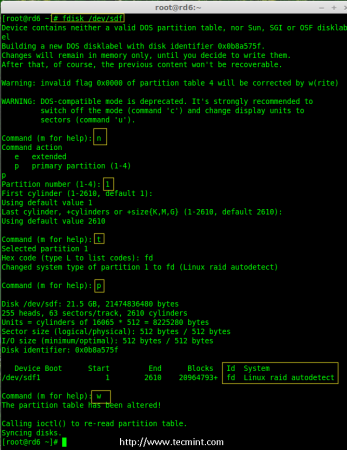

4. Now create partitions for RAID on ‘/dev/sdb‘, ‘/dev/sdc‘, ‘/dev/sdd‘ and ‘/dev/sde‘ with the help of the following fdisk command. Here we show how to create the partition on the sdb drive; the same steps are then followed for the rest of the drives.

Create /dev/sdb Partition

# fdisk /dev/sdb

Please follow the instructions below to create the partition.

- Press ‘n‘ to create a new partition.

- Then choose ‘p‘ for a primary partition.

- Next choose the partition number as 1.

- Accept the default first and last sectors by pressing the Enter key twice.

- Next press ‘p‘ to print the defined partition.

- Press ‘l‘ to list all available partition types.

- Type ‘t‘ to change the partition type.

- Choose ‘fd‘ for Linux raid auto and press Enter to apply.

- Then use ‘p‘ again to print the changes we have made.

- Use ‘w‘ to write the changes.
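Repeating these keystrokes on four disks is tedious, so the answers can be scripted. The sketch below uses a hypothetical helper, `fdisk_raid_keys` (our own name), which only prints the answers from the list above, skipping the informational print and list steps. It assumes an empty disk and the prompt order described above; prompts can differ between fdisk versions, so treat it as a starting point rather than a tested procedure.

```shell
# Hypothetical helper: print the fdisk answers used in the steps above,
# one per line: new, primary, partition 1, two defaults (empty lines),
# change type, type code fd, write.
fdisk_raid_keys() {
    printf '%s\n' n p 1 '' '' t fd w
}

# Review the sequence first; nothing is written to any disk here.
fdisk_raid_keys

# To apply it, pipe the answers into fdisk as root. This is DESTRUCTIVE,
# so it is left commented out; adjust the device list to your system.
# for disk in /dev/sdb /dev/sdc /dev/sdd /dev/sde; do
#     fdisk_raid_keys | fdisk "$disk"
# done
```

Keeping the answers in a function makes it easy to inspect exactly what will be sent to fdisk before running it against a real disk.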

Create /dev/sdc Partition

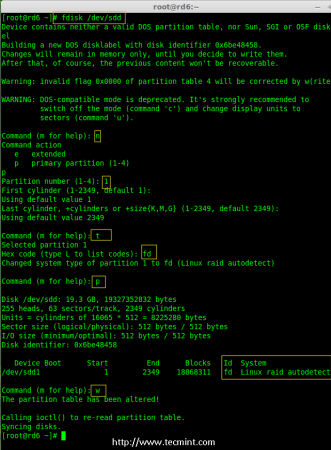

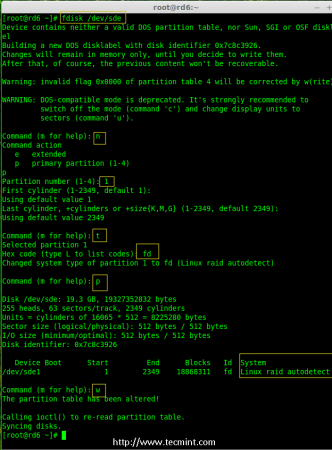

# fdisk /dev/sdc

Create /dev/sdd Partition

# fdisk /dev/sdd

Create /dev/sde Partition

# fdisk /dev/sde

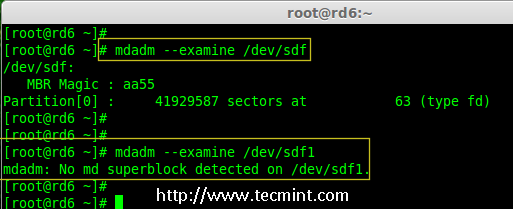

5. After creating the partitions, it’s always a good habit to examine the drives for super-blocks. If no super-blocks exist, we can go ahead and create a new RAID setup.

# mdadm -E /dev/sd[b-e]1

or

# mdadm --examine /dev/sdb1 /dev/sdc1 /dev/sdd1 /dev/sde1

Step 3: Creating md device (RAID)

6. Now it’s time to create the RAID device ‘md0‘ (i.e. /dev/md0), apply the RAID level to all the newly created partitions, and confirm the RAID using the following commands.

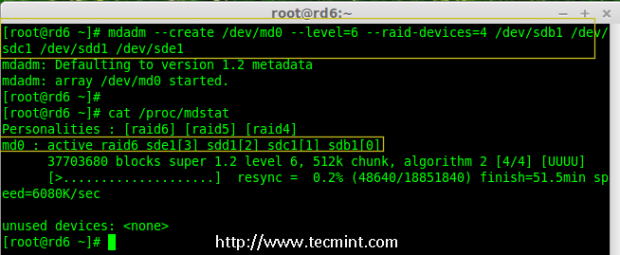

# mdadm --create /dev/md0 --level=6 --raid-devices=4 /dev/sdb1 /dev/sdc1 /dev/sdd1 /dev/sde1

# cat /proc/mdstat

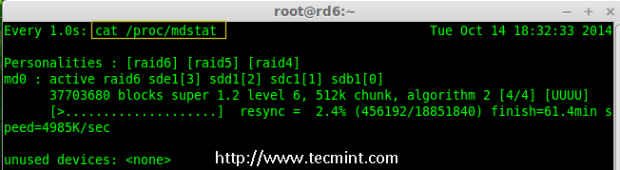

7. You can also follow the progress of the RAID build using the watch command, as shown in the screen grab below.

# watch -n1 cat /proc/mdstat
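When only the progress figure is of interest, it can be pulled out of the mdstat text. The sketch below uses a hypothetical helper, `mdstat_progress` (our own name), and runs it against sample text so it can be tried without an array. The sample numbers are made up for illustration.

```shell
# Hypothetical helper: print the resync/recovery percentage from an
# mdstat-format file. Defaults to the live /proc/mdstat.
mdstat_progress() {
    grep -oE '(resync|recovery) *= *[0-9.]+%' "${1:-/proc/mdstat}"
}

# Sample text in the layout the kernel uses while an array is syncing
# (illustrative values only).
sample=$(mktemp)
cat > "$sample" <<'EOF'
md0 : active raid6 sde1[3] sdd1[2] sdc1[1] sdb1[0]
      [==>..................]  resync = 12.6% (2647168/20954112) finish=1.3min speed=220597K/sec
EOF

mdstat_progress "$sample"
rm -f "$sample"
```

Called without an argument on the RAID host, the function reads /proc/mdstat. It prints nothing and returns a non-zero status once no sync is in progress.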

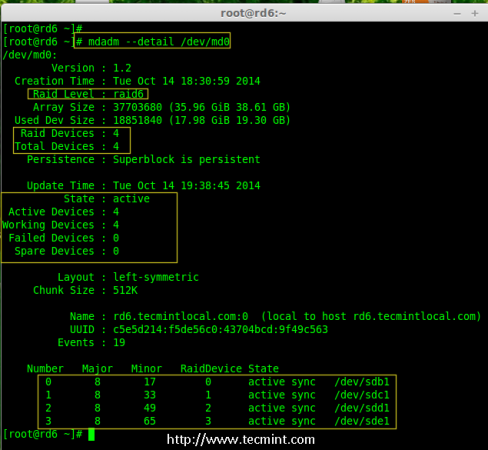

8. Verify the raid devices using the following command.

# mdadm -E /dev/sd[b-e]1

Note: The above command displays the information for all four disks, which is too long to post as output or a screen grab here.

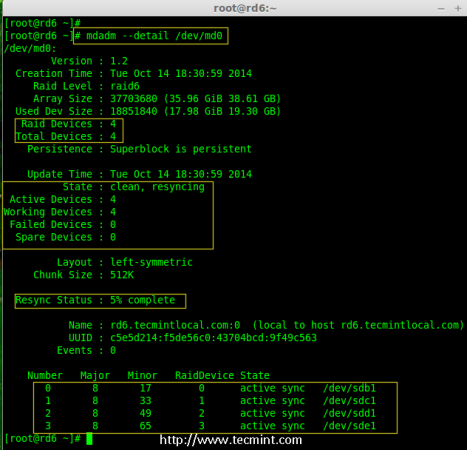

9. Next, verify the RAID array to confirm that re-syncing has started.

# mdadm --detail /dev/md0

Step 4: Creating FileSystem on Raid Device

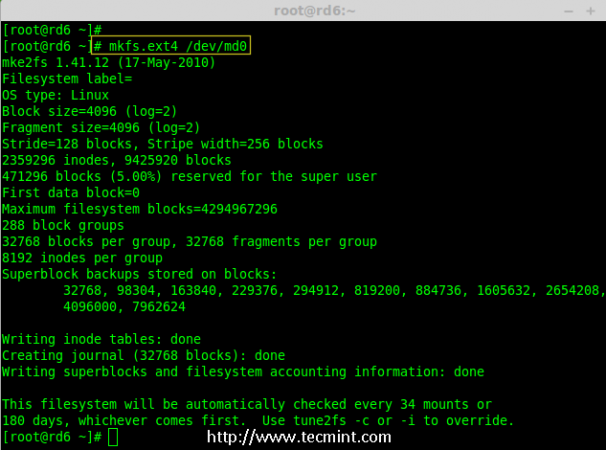

10. Create a filesystem using ext4 for ‘/dev/md0‘ and mount it under /mnt/raid6. Here we’ve used ext4, but you can use any type of filesystem as per your choice.

# mkfs.ext4 /dev/md0

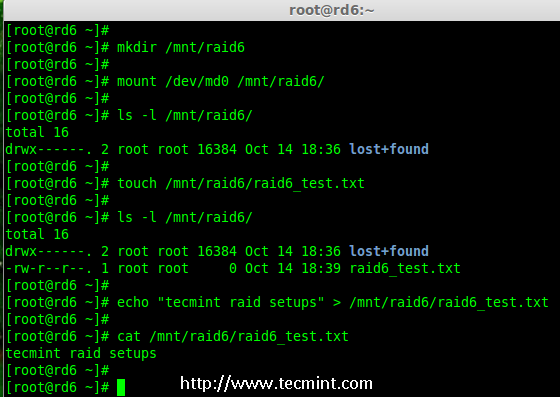

11. Mount the created filesystem under /mnt/raid6 and list the files under the mount point; we should see the lost+found directory.

# mkdir /mnt/raid6

# mount /dev/md0 /mnt/raid6/

# ls -l /mnt/raid6/

12. Create a file under the mount point and write some text to it to verify the content.

# touch /mnt/raid6/raid6_test.txt

# ls -l /mnt/raid6/

# echo "tecmint raid setups" > /mnt/raid6/raid6_test.txt

# cat /mnt/raid6/raid6_test.txt

13. To auto-mount the device at system startup, open /etc/fstab and append the entry below. The mount point may differ according to your environment.

# vim /etc/fstab

/dev/md0 /mnt/raid6 ext4 defaults 0 0
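If the entry is added from a script instead of an editor, it is worth guarding against appending it twice. The sketch below uses a hypothetical helper, `add_fstab_entry` (our own name), and demonstrates it on a scratch file so the real /etc/fstab is not touched.

```shell
# Hypothetical helper: append a line to an fstab-style file only if that
# exact line is not already present.
add_fstab_entry() {
    file=$1
    entry=$2
    grep -qxF -- "$entry" "$file" 2>/dev/null || printf '%s\n' "$entry" >> "$file"
}

# Demonstrated on a scratch file; the second call is a no-op.
scratch=$(mktemp)
add_fstab_entry "$scratch" '/dev/md0 /mnt/raid6 ext4 defaults 0 0'
add_fstab_entry "$scratch" '/dev/md0 /mnt/raid6 ext4 defaults 0 0'
cat "$scratch"
rm -f "$scratch"
```

On the real system the first argument would be /etc/fstab and the command would be run as root, ideally after taking a backup copy of the file.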

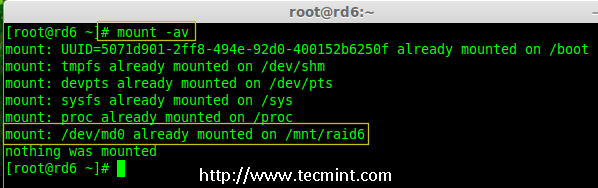

14. Next, execute the ‘mount -a‘ command (here with -v for verbose output) to check whether there is any error in the fstab entry.

# mount -av

Step 5: Save RAID 6 Configuration

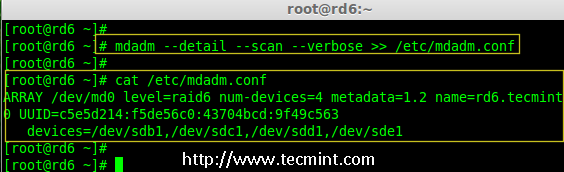

15. Please note that by default RAID doesn’t have a config file. We have to save the configuration manually using the command below, and then verify the status of the device ‘/dev/md0‘.

# mdadm --detail --scan --verbose >> /etc/mdadm.conf

# mdadm --detail /dev/md0
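The location of the config file depends on the distribution: Red Hat style systems such as the CentOS host used here read /etc/mdadm.conf, while Debian and Ubuntu read /etc/mdadm/mdadm.conf. The sketch below uses a hypothetical helper, `mdadm_conf_path` (our own name), that picks the path by checking whether the /etc/mdadm directory exists. That check is a simple heuristic, not an official detection method.

```shell
# Hypothetical helper: guess where mdadm's config file lives.
# Debian/Ubuntu use /etc/mdadm/mdadm.conf; Red Hat style systems use
# /etc/mdadm.conf. The optional argument is a root prefix, for testing.
mdadm_conf_path() {
    root=${1:-}
    if [ -d "$root/etc/mdadm" ]; then
        echo "$root/etc/mdadm/mdadm.conf"
    else
        echo "$root/etc/mdadm.conf"
    fi
}

conf=$(mdadm_conf_path)
echo "mdadm config file: $conf"

# As root, the scan output would then be saved with (commented out here):
# mdadm --detail --scan --verbose >> "$conf"
```

Writing the scan output to a file the system does not read is one possible reason for an array not being assembled under its expected name after a reboot.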

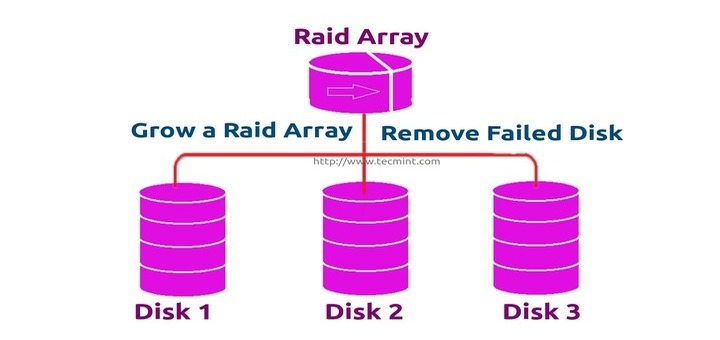

Step 6: Adding a Spare Drive

16. The array now has 4 disks with two sets of parity information. Even if one or two of the disks fail we can still get the data back, because RAID 6 keeps double parity.

If a second disk fails too, we can add a new one before losing a third disk. It is possible to define a spare drive while creating the RAID set, but I did not do so here. A spare can also be added later, either before or after a drive failure. Since the RAID set is already created, let me add a spare drive now for demonstration.

For demonstration purposes, I’ve hot-plugged a new HDD (i.e. /dev/sdf). Let’s verify the attached disk.

# ls -l /dev/ | grep sd

17. Now confirm again, using the same mdadm command, whether any RAID is already configured on the newly attached disk.

# mdadm --examine /dev/sdf

Note: Just as we created partitions on the four disks earlier, we have to create a new partition on the newly plugged disk using the fdisk command.

# fdisk /dev/sdf

18. After creating the new partition on /dev/sdf, check the partition for existing RAID information, add it as a spare to the /dev/md0 RAID device, and verify the added device.

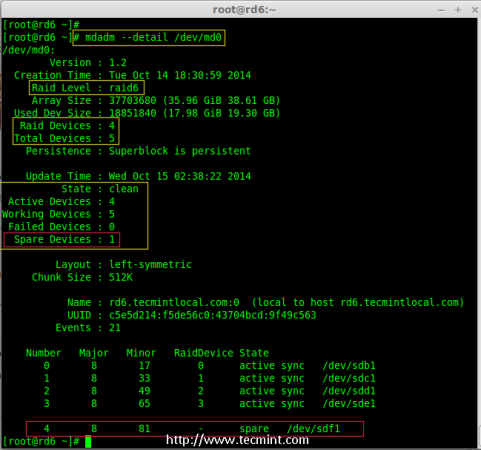

# mdadm --examine /dev/sdf

# mdadm --examine /dev/sdf1

# mdadm --add /dev/md0 /dev/sdf1

# mdadm --detail /dev/md0

Step 7: Check Raid 6 Fault Tolerance

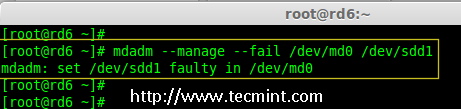

19. Now let us check whether the spare drive takes over automatically if any one of the disks in our array fails. For testing, I’ve manually marked one of the drives as failed.

Here, we’re going to mark /dev/sdd1 as the failed drive.

# mdadm --manage --fail /dev/md0 /dev/sdd1

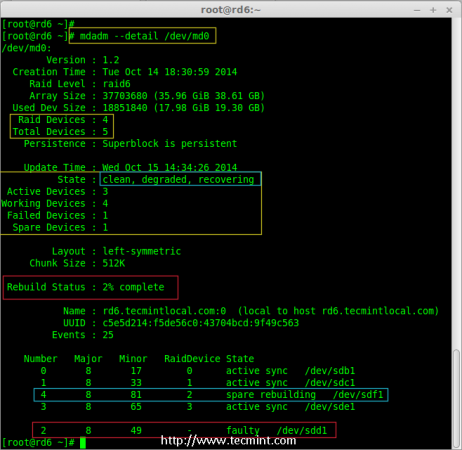

20. Let me get the details of RAID set now and check whether our spare started to sync.

# mdadm --detail /dev/md0

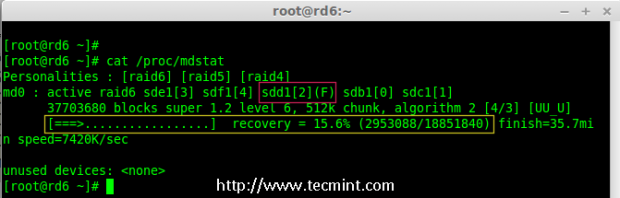

Hurray! Here we can see that the spare got activated and started the rebuilding process. At the bottom we can see the drive /dev/sdd1 listed as faulty. We can monitor the rebuild process using the following command.

# cat /proc/mdstat
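Once the rebuild onto the spare has finished, the faulty member still has to be taken out of the array and a replacement added. The sketch below uses a hypothetical helper, `replace_member_cmds` (our own name), which only prints the mdadm commands for review instead of running them. The replacement partition /dev/sdg1 is a made-up name for a newly attached and partitioned disk.

```shell
# Hypothetical helper: print (not run) the mdadm commands for removing a
# failed member from an array and adding a replacement partition.
replace_member_cmds() {
    array=$1
    failed=$2
    replacement=$3
    echo "mdadm --manage $array --remove $failed"
    echo "mdadm --manage $array --add $replacement"
}

# /dev/md0 and /dev/sdd1 follow this article's setup; /dev/sdg1 is a
# hypothetical replacement partition. Adjust all names to your system.
replace_member_cmds /dev/md0 /dev/sdd1 /dev/sdg1
```

After checking the printed commands against your own device names, they can be run as root. The newly added partition then becomes the spare for the next failure.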

Conclusion:

Here we have seen how to set up RAID 6 using four disks. This RAID level is one of the more expensive setups, but it offers high redundancy. We will see how to set up a nested RAID 10 and much more in the next articles. Till then, stay connected with TECMINT.

My RAID 6 always renames itself to /dev/md127 after rebooting. Do you know why it’s happening?

Hi all, great articles, and sorry in advance about my English…

I had a Lacie 5Big network 2 with 5 2tb hdd of it disk 2 and 3 are missing from raid 6, so the Lacie never boot again.. I cloned the 3 rest disks mount it on Ubuntu virtual machine, I need to emulate the raid 6 to recover my information please..

I had installed mdadm software but i don’t know where start

There is a small typo in this article. “and mount it under /mnt/raid5” should be “and mount it under /mnt/raid6”.

Irrespective – very informative! Thanks.

@Nige,

Thanks for informing us, we’ve corrected the command in the article..

I have 8 old HDDs varying from 80GB to 250GB in size. I want to create a redundant disk array using these disks and share it on the LAN so that others can store their files, and thus make use of the old HDDs. I have a Dell Precision T3500 with Win 7 and a built-in RAID controller. Can you help me get started?

Thank you for the very well documented article.

1) My raid would disappear after each reboot. md0 would be gone. My theory is because of this command “mdadm --detail --scan --verbose >> /etc/mdadm.conf”

When I changed location to /etc/mdadm/mdadm.conf then I think the raid survived reboot (this is an unconfirmed theory)

2) Here are suggested commands to use “parted” for drives greater than 2TB.

sudo parted -a optimal /dev/sda

(parted) mklabel gpt

(parted) mkpart primary 1 -1 (or ==> mkpart primary 1MiB 512MiB ???)

(parted) align-check

alignment type(min/opt) [optimal]/minimal? optimal

Partition number? 1

(parted) set 1 raid on

(parted) quit

Thanks again for an excellent article

Hi ravi,

I am using raid5(3disk – 600GB each) in LVM FS, so i get 1.8T LVM partition. Now I have to add new disk – 1TB in LVM. Do we have to add it in Raid or can we attach it directly and convert it into pv then extend vg..

what could be the possible ways ?

@Mike,

You should follow below procedure for extending your space..

1. Add a new drive

2. Use mdadm --grow to add it to the RAID5

3. pvresize to increase the size of the PV

4. lvextend to increase the size of the LV

5. resize2fs to grow the filesystem

But how can i Up again that /dev/sdd1 partition ???

We need to remove the failed disk and replace with new one.

Sorry but is it possible to explain some details of removing the failed disk & replacing with new one?..

or would you leave some links about it ..

I don’t know how to ㅠ

What about Raid 5 that Ravi sir has promised to be published in Friday but haven’t. Is waiting for this.

@Debasish,

Sorry for delay, the raid 5 article is in progress, will soon publish it…may be Tomorrow or Friday..

I think this Tut should be Part 4…….

No, actually the RAID 5 setup is the Part 4 article, but we mistakenly posted Part 5 first. Part 4 will be published on Friday..