Apache Kafka is a distributed messaging system that is widely used in big data projects and across the data analytics life cycle. It is an open-source platform for building real-time data streaming pipelines, and its publish-subscribe model is designed for reliability, scalability, and durability.

Kafka can run standalone or as a cluster. It stores streaming data in categories called topics. Each topic is split into a number of partitions so that it can handle an arbitrary amount of data, and partitions can be replicated for fault tolerance, much as blocks are in HDFS. In a Kafka cluster, a broker is the component that stores the published data.

Zookeeper is a mandatory service for the Kafka version used in this article, as it coordinates the Kafka brokers. It plays a key role for both producers and consumers, because it is responsible for maintaining the state of all brokers.

In this article, we will explain how to install Apache Kafka on a single-node CentOS 7 or RHEL 7 system.

Installing Apache Kafka in CentOS 7

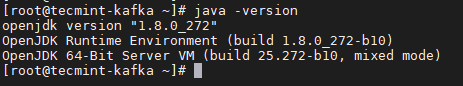

1. First, you need to install Java on your system to run Apache Kafka without any errors. So, install the default available version of Java using the following yum command and verify the Java version as shown.

# yum -y install java-1.8.0-openjdk
# java -version
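Kafka 2.7.0 is normally run on Java 8 or Java 11, so it can help to confirm the major version before continuing. The following is a minimal sketch of such a check. The function name java_major_version is hypothetical (it is not part of Java or Kafka), and the sketch assumes the usual `version "..."` line that java -version prints.

```shell
# Hypothetical helper: reads `java -version` output on stdin and prints
# the major Java version (8 for "1.8.0_292", 11 for "11.0.11").
java_major_version() {
    awk -F'"' '/version "/ {
        # $2 is the quoted version string, e.g. 1.8.0_292 or 11.0.11
        split($2, parts, "[.]")
        # Old-style versions start with "1." and carry the major number second
        v = (parts[1] == "1") ? parts[2] : parts[1]
        print v + 0
        exit
    }'
}

# Usage (java -version writes to stderr, so redirect it into the pipe):
#   java -version 2>&1 | java_major_version
```

If the function prints nothing, no version line was found, which usually means that Java is not installed or not on the PATH.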

2. Next, download the most recent stable version of Apache Kafka from the official website or use the following wget command to download it directly and extract it.

# wget https://mirrors.estointernet.in/apache/kafka/2.7.0/kafka_2.13-2.7.0.tgz
# tar -xzf kafka_2.13-2.7.0.tgz

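3. Create a symbolic link to the extracted Kafka directory, then add the Kafka bin directory to the PATH in the .bash_profile file and reload the file as shown. The commands assume that the archive was extracted in /root. The single quotes keep $PATH from being expanded until the profile is loaded.

# ln -s kafka_2.13-2.7.0 kafka
# echo 'export PATH=$PATH:/root/kafka/bin' >> ~/.bash_profile
# source ~/.bash_profile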
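Running the echo command more than once appends the same export line to .bash_profile each time. The following is a minimal sketch of a guard against that. The function name append_once is hypothetical, and the path in the usage comment assumes the /root/kafka symbolic link created in this step.

```shell
# Hypothetical helper: append a line to a file only if that exact line
# is not already present.
append_once() {
    ao_line=$1
    ao_file=$2
    # Make sure the file exists so that grep does not fail on a missing file
    touch "$ao_file"
    # -x matches the whole line, -F treats the text as a fixed string
    grep -qxF -- "$ao_line" "$ao_file" || printf '%s\n' "$ao_line" >> "$ao_file"
}

# Usage:
#   append_once 'export PATH=$PATH:/root/kafka/bin' ~/.bash_profile
```

The single quotes in the usage line matter: they write a literal $PATH into the profile instead of the value that PATH happens to have when the command runs.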

4. Next, start Zookeeper, which comes bundled with the Kafka package. Since this is a single-node cluster, you can start Zookeeper with its default properties.

# zookeeper-server-start.sh -daemon /root/kafka/config/zookeeper.properties

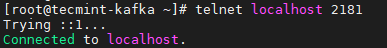

5. Verify that Zookeeper is accessible by connecting to its port, 2181, with telnet.

# telnet localhost 2181

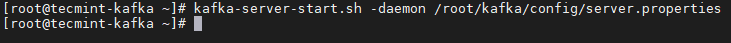

6. Start Kafka with its default properties.

# kafka-server-start.sh -daemon /root/kafka/config/server.properties

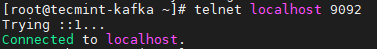

7. Verify that Kafka is accessible by connecting to its port, 9092, with telnet.

# telnet localhost 9092

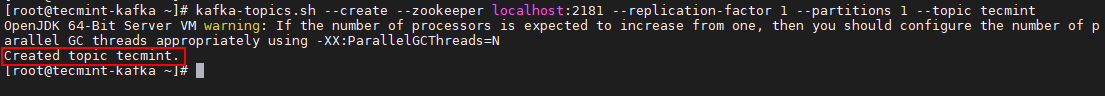
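Both services are started with -daemon, so the start commands return before the ports are necessarily open, and telnet may not be installed on a minimal system. The following is a minimal sketch of a scripted alternative to the telnet checks in steps 5 and 7. The function name wait_for_port is hypothetical. The sketch assumes that bash (for its /dev/tcp redirection) and the coreutils timeout command are available.

```shell
# Hypothetical helper: poll a TCP port until it accepts a connection.
# Arguments: host, port, number of attempts (default 10),
# delay between attempts in seconds (default 1).
wait_for_port() {
    wp_host=$1
    wp_port=$2
    wp_retries=${3:-10}
    wp_delay=${4:-1}
    wp_i=0
    while [ "$wp_i" -lt "$wp_retries" ]; do
        # bash opens a TCP connection when redirecting to /dev/tcp/HOST/PORT;
        # timeout stops an attempt that hangs for more than 2 seconds
        if timeout 2 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$wp_host" "$wp_port" 2>/dev/null; then
            return 0
        fi
        wp_i=$((wp_i + 1))
        sleep "$wp_delay"
    done
    return 1
}

# Usage:
#   wait_for_port localhost 2181 && echo "Zookeeper is reachable"
#   wait_for_port localhost 9092 && echo "Kafka is reachable"
```

An open port only shows that something is listening. It does not prove that the broker is fully initialized, so creating and listing a topic remains the more meaningful check.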

8. Next, create a sample topic.

# kafka-topics.sh --create --zookeeper localhost:2181 --replication-factor 1 --partitions 1 --topic tecmint

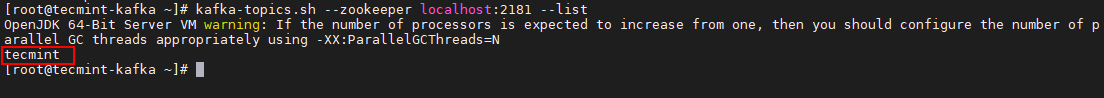
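Kafka accepts only ASCII letters, digits, periods, underscores, and hyphens in topic names, limits them to 249 characters, and rejects the names "." and "..". The following is a minimal sketch that checks a name against these rules before calling kafka-topics.sh. The function name valid_topic_name is hypothetical and not part of the Kafka tools.

```shell
# Hypothetical helper: return 0 if the argument is a legal Kafka topic name.
valid_topic_name() {
    vt_name=$1
    # Empty names and names longer than 249 characters are rejected
    [ -n "$vt_name" ] || return 1
    [ "${#vt_name}" -le 249 ] || return 1
    case $vt_name in
        .|..) return 1 ;;                    # reserved names
        *[!A-Za-z0-9._-]*) return 1 ;;       # any character outside the legal set
    esac
    return 0
}

# Usage:
#   valid_topic_name tecmint && kafka-topics.sh --create --zookeeper localhost:2181 \
#       --replication-factor 1 --partitions 1 --topic tecmint
```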

9. List the topics to confirm that the new topic was created.

# kafka-topics.sh --zookeeper localhost:2181 --list
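In a script, the list output can be checked for a specific topic instead of being read by eye. The following is a minimal sketch. The function name topic_exists is hypothetical, and the sketch assumes that the list command prints one topic name per line.

```shell
# Hypothetical helper: read topic names on stdin (one per line) and
# return 0 if the given name is among them.
topic_exists() {
    # -x requires a whole-line match so that "tecmint" does not match "tecmint-2"
    grep -qxF -- "$1"
}

# Usage:
#   kafka-topics.sh --zookeeper localhost:2181 --list | topic_exists tecmint \
#       && echo "topic tecmint exists"
```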

Conclusion

In this article, we have seen how to install a single-node Kafka cluster on CentOS 7. We will see how to install a multi-node Kafka cluster in the next article.